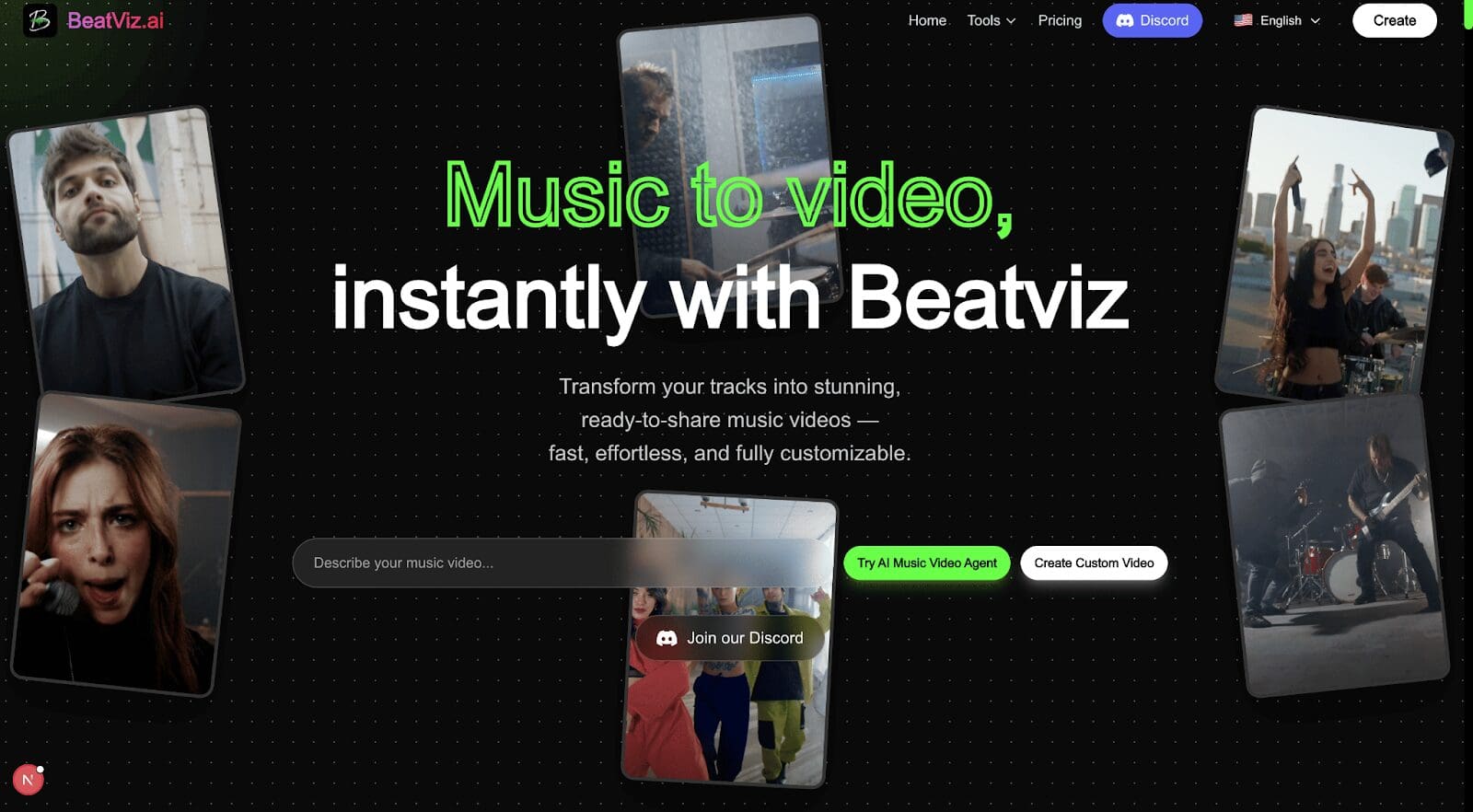

HOW BEATVIZ IS REDEFINING THE AI MUSIC VIDEO GENERATOR FOR FILM AND STAGE ARTISTS

by Lamont Washington | February 1, 2026

in Extras, Virtual

For decades, music videos have been a meeting point between sound and image—a space where rhythm becomes motion and emotion takes visual form. From experimental cinema to theatrical performances, artists have long relied on visual storytelling to deepen the audience’s connection to music. Today, as artificial intelligence enters the creative process, a new question emerges: can an AI music video generator truly serve artistic expression rather than replace it?

Beatviz offers an intriguing answer. Rather than treating AI as a shortcut or novelty, Beatviz reframes the AI music video generator as a creative partner—one that helps filmmakers, stage artists, and musicians visualize rhythm, structure emotion, and experiment with new forms of storytelling.

The Long Relationship Between Music and Visual Narrative

Music has always shaped visual art. In film, a score guides pacing, tension, and emotional payoff. In theater, rhythm influences movement, lighting, and scene transitions. Even before digital tools existed, artists sought ways to “see” music—through choreography, montage, or abstract imagery.

Traditional music video production, however, is time-consuming and resource-heavy. Directors must interpret sound subjectively, relying on instinct and collaboration to translate rhythm into visuals. While this process can be powerful, it also limits experimentation. Many ideas never reach the screen simply because they are too expensive or complex to prototype.

This is where the modern AI music video generator enters the conversation—not as a replacement for creativity, but as a way to expand it.

From Automation to Interpretation

Many AI music video generators focus on automation: upload a track, select a style, and receive a finished video within minutes. While efficient, this approach often reduces music to a background input rather than a narrative force.

Beatviz takes a different path. Instead of starting with visual templates, it begins with the music itself—analyzing rhythm, tempo changes, intensity, and structural patterns. The result is not a generic video overlay, but a visual interpretation shaped by the music’s internal logic.
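For readers curious what "starting with the music" might look like in practice, here is a minimal sketch in Python. It is an illustration under stated assumptions, not Beatviz's actual method, which the article does not describe. It builds a toy signal, measures intensity frame by frame, flags sudden jumps in energy as beats, and estimates tempo from the spacing between them. Every function name and parameter here (`frame_energy`, `find_onsets`, `estimate_bpm`, `ratio`, `floor`) is hypothetical.

```python
import math

def frame_energy(samples, frame_size):
    """Return RMS energy for each non-overlapping frame of the signal."""
    energies = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        energies.append(math.sqrt(sum(x * x for x in frame) / frame_size))
    return energies

def find_onsets(energies, ratio=2.0, floor=0.01):
    """Flag frames whose energy jumps sharply relative to the previous frame.

    The frame before the first one is treated as silence (assumption).
    """
    onsets = []
    for i, energy in enumerate(energies):
        previous = energies[i - 1] if i > 0 else 0.0
        previous = max(previous, floor)  # avoid dividing by near-zero energy
        if energy > floor and energy / previous >= ratio:
            onsets.append(i)
    return onsets

def estimate_bpm(onset_times):
    """Estimate tempo from the mean gap between consecutive onsets."""
    gaps = [b - a for a, b in zip(onset_times, onset_times[1:])]
    return 60.0 / (sum(gaps) / len(gaps))

def synthetic_track(sample_rate=1000, seconds=4):
    """Toy signal: a 0.1 s burst of a 50 Hz tone once per second, else silence."""
    burst_length = sample_rate // 10
    samples = []
    for n in range(sample_rate * seconds):
        in_burst = (n % sample_rate) < burst_length
        tone = math.sin(2 * math.pi * 50 * n / sample_rate)
        samples.append(tone if in_burst else 0.0)
    return samples

sample_rate = 1000
frame_size = 100  # 0.1 s per frame at this sample rate
track = synthetic_track(sample_rate=sample_rate)
energies = frame_energy(track, frame_size)
onset_frames = find_onsets(energies)
onset_times = [f * frame_size / sample_rate for f in onset_frames]
bpm = estimate_bpm(onset_times)
```

On this toy signal the sketch finds one beat per second, which works out to 60 beats per minute. A real track would need far more robust analysis, but the principle is the same: the timing and intensity data come from the sound first, and any visual decision is layered on top of them.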

For film and stage artists, this distinction matters. Rhythm is not just timing; it is meaning. A sudden silence, a slow build, or an aggressive beat drop can signal emotional shifts in a story. By visualizing these musical moments, Beatviz allows creators to explore how sound drives narrative before committing to final production choices.

A Tool for Storyboarding and Concept Development

One of the most compelling uses of an AI music video generator like Beatviz is in early-stage creative development. Filmmakers and theater directors often rely on mood boards, animatics, or rough edits to communicate ideas. These tools, while useful, are largely static.

Beatviz introduces movement into this phase. Artists can generate dynamic visual responses to music and use them as living storyboards—testing how rhythm interacts with pacing, lighting, or emotional tone. For a stage production, this might influence choreography or scene transitions. For film, it could shape editing rhythms or montage structures.

Rather than replacing human decision-making, the AI acts as a sketchbook in motion—fast, flexible, and open to revision.

Reimagining Music Videos as Performance Art

Music videos have increasingly blurred the line between cinema and performance art. Many contemporary videos borrow from theater, dance, and experimental film, emphasizing atmosphere over literal storytelling.

Beatviz aligns naturally with this trend. Its visual outputs often feel closer to abstract performance pieces than traditional narrative videos. This quality makes it especially relevant for stage artists exploring digital backdrops, projections, or hybrid live-media performances.

Imagine a live concert or theatrical production where visuals evolve in real time, responding to rhythm and intensity. While Beatviz is not a live performance engine, its approach to music-driven visuals points toward this future—where AI music video generators become part of the performance ecosystem rather than a post-production afterthought.

AI as a Creative Mirror, Not a Replacement

One of the most common concerns surrounding AI in the arts is authorship. If an algorithm generates visuals, who is the artist?

Beatviz subtly challenges this fear by positioning AI as a mirror. The system does not invent emotion on its own; it reflects patterns already present in the music. The creative act remains with the human—choosing the music, interpreting the output, refining the result, and deciding what resonates.

In this sense, Beatviz resembles earlier technological shifts in art history. Editing software did not eliminate film editors. Synthesizers did not replace musicians. Instead, these tools expanded the vocabulary of creative expression. The AI music video generator may represent a similar evolution—one that changes how artists think, not what they feel.

Expanding Access Without Diluting Art

Another important dimension is accessibility. High-quality music videos and visual experiments were once reserved for artists with budgets and production teams. AI tools lower this barrier, allowing independent filmmakers, composers, and stage artists to experiment visually without large resources.

The risk, of course, is homogenization. When tools prioritize presets and trends, art can become repetitive. Beatviz avoids this trap by focusing on music-driven variation rather than fixed styles. Because each piece of music generates a different visual response, the results remain personal and context-dependent.

For emerging artists, this balance between access and individuality is crucial. The AI music video generator becomes a starting point—not a final destination.

Looking Ahead: The Future of Visual Music Storytelling

As film, theater, and music continue to intersect, tools like Beatviz hint at a future where visual storytelling begins with sound rather than image. Directors may design scenes around musical structure. Stage designers may choreograph light and space based on rhythm data. Music videos may evolve into immersive, adaptive experiences.

In this future, the question will no longer be whether AI belongs in the arts, but how thoughtfully it is used. Beatviz suggests that when AI listens carefully—to rhythm, to structure, to emotion—it can enhance artistic intent rather than dilute it.

The AI music video generator, in this light, is not about speed or spectacle. It is about seeing music more clearly—and giving artists new ways to let audiences feel it.
